pixyz.losses (Loss API)

Loss

Negative expected value of log-likelihood (entropy)

CrossEntropy

class pixyz.losses.CrossEntropy(p1, p2, input_var=None)

Bases: pixyz.losses.losses.Loss

Cross entropy, a.k.a. the negative expected value of log-likelihood (Monte Carlo approximation).

$$-\mathbb{E}_{q(x)}[\log p(x)] \approx -\frac{1}{L}\sum_{l=1}^L \log p(x_l),$$

where $x_l \sim q(x)$.

loss_text
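The Monte Carlo estimate can be sketched in plain Python. This is an illustration, not pixyz's implementation. It assumes univariate Gaussians q(x) = N(0, 1) and p(x) = N(1, 2^2), and the helpers `gauss_log_pdf`, `mc_cross_entropy` and `exact_cross_entropy` are hypothetical names. The sample average is compared with the Gaussian closed form.

```python
import math
import random


def gauss_log_pdf(x: float, mu: float, sigma: float) -> float:
    """Log density of the univariate Gaussian N(mu, sigma^2) at x."""
    return -0.5 * math.log(2 * math.pi * sigma ** 2) - (x - mu) ** 2 / (2 * sigma ** 2)


def mc_cross_entropy(q_mu, q_sigma, p_mu, p_sigma, num_samples=100_000, seed=0):
    """Estimate -E_{q(x)}[log p(x)] as -(1/L) * sum_l log p(x_l) with x_l ~ q(x)."""
    rng = random.Random(seed)
    total = 0.0
    for _ in range(num_samples):
        x_l = rng.gauss(q_mu, q_sigma)  # draw x_l ~ q(x)
        total += gauss_log_pdf(x_l, p_mu, p_sigma)
    return -total / num_samples


def exact_cross_entropy(q_mu, q_sigma, p_mu, p_sigma):
    """Closed form of -E_{q(x)}[log p(x)] for two univariate Gaussians."""
    return (0.5 * math.log(2 * math.pi * p_sigma ** 2)
            + (q_sigma ** 2 + (q_mu - p_mu) ** 2) / (2 * p_sigma ** 2))


# Hypothetical example: q(x) = N(0, 1), p(x) = N(1, 2^2)
estimate = mc_cross_entropy(0.0, 1.0, 1.0, 2.0)
exact = exact_cross_entropy(0.0, 1.0, 1.0, 2.0)
print(f"Monte Carlo: {estimate:.4f}  closed form: {exact:.4f}")
```

The estimate's error shrinks roughly as 1/sqrt(L), which is why L is a tuning knob for Monte Carlo losses.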

Entropy

class pixyz.losses.Entropy(p1, input_var=None)

Bases: pixyz.losses.losses.Loss

Entropy (Monte Carlo approximation).

$$-\mathbb{E}_{p(x)}[\log p(x)] \approx -\frac{1}{L}\sum_{l=1}^L \log p(x_l),$$

where $x_l \sim p(x)$.

Note: This class is a special case of the CrossEntropy class. You can get the same result with CrossEntropy.

loss_text
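The note above can be illustrated with a plain-Python sketch in which entropy is literally the cross entropy with both arguments set to the same distribution. The Gaussian p(x) = N(0, 1) and the helper names are assumptions for illustration only, not pixyz code.

```python
import math
import random


def gauss_log_pdf(x, mu, sigma):
    """Log density of N(mu, sigma^2) at x."""
    return -0.5 * math.log(2 * math.pi * sigma ** 2) - (x - mu) ** 2 / (2 * sigma ** 2)


def mc_cross_entropy(q, p, num_samples=100_000, seed=0):
    """Estimate -E_{q(x)}[log p(x)]; q and p are (mu, sigma) tuples."""
    rng = random.Random(seed)
    total = sum(gauss_log_pdf(rng.gauss(*q), *p) for _ in range(num_samples))
    return -total / num_samples


def mc_entropy(p, num_samples=100_000, seed=0):
    """Entropy as the special case q = p of the cross entropy."""
    return mc_cross_entropy(p, p, num_samples, seed)


p = (0.0, 1.0)                                # p(x) = N(0, 1)
exact = 0.5 * math.log(2 * math.pi * math.e)  # differential entropy of N(0, 1)
print(f"Monte Carlo: {mc_entropy(p):.4f}  closed form: {exact:.4f}")
```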

StochasticReconstructionLoss

class pixyz.losses.StochasticReconstructionLoss(encoder, decoder, input_var=None)

Bases: pixyz.losses.losses.Loss

Reconstruction loss (Monte Carlo approximation).

$$-\mathbb{E}_{q(z|x)}[\log p(x|z)] \approx -\frac{1}{L}\sum_{l=1}^L \log p(x|z_l),$$

where $z_l \sim q(z|x)$.

Note: This class is a special case of the CrossEntropy class. You can get the same result with CrossEntropy.

loss_text
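To make the encode-then-decode structure of the estimate concrete, here is a plain-Python sketch with a hypothetical linear-Gaussian encoder q(z|x) = N(a x, s^2) and decoder p(x|z) = N(b z, t^2). The constants `A`, `S`, `B`, `T` and the function names are illustrative assumptions; pixyz's encoder and decoder are distribution objects, not these functions.

```python
import math
import random


def gauss_log_pdf(x, mu, sigma):
    """Log density of N(mu, sigma^2) at x."""
    return -0.5 * math.log(2 * math.pi * sigma ** 2) - (x - mu) ** 2 / (2 * sigma ** 2)


# Hypothetical encoder q(z|x) = N(A*x, S^2) and decoder p(x|z) = N(B*z, T^2).
A, S = 0.8, 0.5
B, T = 1.2, 0.3


def mc_reconstruction_loss(x, num_samples=100_000, seed=0):
    """Estimate -E_{q(z|x)}[log p(x|z)] with z_l ~ q(z|x)."""
    rng = random.Random(seed)
    total = 0.0
    for _ in range(num_samples):
        z_l = rng.gauss(A * x, S)              # encode: z_l ~ q(z|x)
        total += gauss_log_pdf(x, B * z_l, T)  # decode: log p(x|z_l)
    return -total / num_samples


def exact_reconstruction_loss(x):
    """Closed form, using E[(x - B z)^2] = (x - B A x)^2 + B^2 S^2 for z ~ q(z|x)."""
    sq_err = (x - B * A * x) ** 2 + B ** 2 * S ** 2
    return 0.5 * math.log(2 * math.pi * T ** 2) + sq_err / (2 * T ** 2)


print(mc_reconstruction_loss(1.5), exact_reconstruction_loss(1.5))
```

The `B ** 2 * S ** 2` term shows that encoder noise adds to the expected reconstruction error even when the mean reconstruction is nearly exact.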

LossExpectation

class pixyz.losses.LossExpectation(p, loss, input_var=None)

Bases: pixyz.losses.losses.Loss

Expectation of a given loss function (Monte Carlo approximation).

$$\mathbb{E}_{p(x)}[loss(x)] \approx \frac{1}{L}\sum_{l=1}^L loss(x_l),$$

where $x_l \sim p(x)$.

loss_text
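The same averaging works for any loss, which is what distinguishes this class from the special-purpose ones above. In the sketch below, plain callables stand in for the distribution `p` and the `loss` argument. The sampler N(1, 0.5^2) and the loss (x - 2)^2 are hypothetical choices that make the exact answer easy to check.

```python
import random
from typing import Callable


def mc_expectation(sample: Callable[[random.Random], float],
                   loss: Callable[[float], float],
                   num_samples: int = 100_000, seed: int = 0) -> float:
    """Estimate E_{p(x)}[loss(x)] as (1/L) * sum_l loss(x_l) with x_l ~ p(x)."""
    rng = random.Random(seed)
    return sum(loss(sample(rng)) for _ in range(num_samples)) / num_samples


def sample_p(rng: random.Random) -> float:
    """Draw one sample from p(x) = N(1, 0.5^2)."""
    return rng.gauss(1.0, 0.5)


def squared_distance_to_two(x: float) -> float:
    """An arbitrary loss function of x."""
    return (x - 2.0) ** 2


# Exact value for comparison: E[(x - 2)^2] = (1 - 2)^2 + 0.5^2 = 1.25
estimate = mc_expectation(sample_p, squared_distance_to_two)
print(f"{estimate:.3f}")
```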

Negative log-likelihood

NLL

class pixyz.losses.NLL(p, input_var=None)

Bases: pixyz.losses.losses.Loss

Negative log-likelihood.

loss_text
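As a minimal illustration of the quantity itself, the sketch below computes -log p(x_i) for each observation under an assumed Gaussian p(x) = N(mu, sigma^2). The function `nll` is hypothetical. It returns one value per example and leaves any reduction over the batch to the caller; how pixyz shapes its output is not stated here.

```python
import math


def gauss_log_pdf(x, mu, sigma):
    """Log density of N(mu, sigma^2) at x."""
    return -0.5 * math.log(2 * math.pi * sigma ** 2) - (x - mu) ** 2 / (2 * sigma ** 2)


def nll(batch, mu, sigma):
    """Per-example negative log-likelihood -log p(x_i) under p(x) = N(mu, sigma^2)."""
    return [-gauss_log_pdf(x, mu, sigma) for x in batch]


losses = nll([0.0, 1.0, -2.0], mu=0.0, sigma=1.0)
print([round(v, 4) for v in losses])
```

Each value grows with the squared distance from the mean, so outlying observations dominate the loss.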

Lower bound

ELBO

class pixyz.losses.ELBO(p, q, input_var=None)

Bases: pixyz.losses.losses.Loss

The evidence lower bound (Monte Carlo approximation).

$$\mathbb{E}_{q(z|x)}\left[\log \frac{p(x,z)}{q(z|x)}\right] \approx \frac{1}{L}\sum_{l=1}^L \log \frac{p(x, z_l)}{q(z_l|x)},$$

where $z_l \sim q(z|x)$.

loss_text
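The bound $\frac{1}{L}\sum_{l} \log \frac{p(x, z_l)}{q(z_l|x)}$ with $z_l \sim q(z|x)$ can be checked on a conjugate toy model where $\log p(x)$ is known. The model p(z) = N(0, 1), p(x|z) = N(z, 1) (so p(x) = N(0, 2) and the true posterior is N(x/2, 1/2)) and all function names are assumptions for illustration. When q is the true posterior the bound is tight. When q is the prior the gap equals D_KL[q || posterior], about 0.403 here.

```python
import math
import random


def gauss_log_pdf(x, mu, sigma):
    """Log density of N(mu, sigma^2) at x."""
    return -0.5 * math.log(2 * math.pi * sigma ** 2) - (x - mu) ** 2 / (2 * sigma ** 2)


def log_joint(x, z):
    """Toy model (assumption): p(z) = N(0, 1), p(x|z) = N(z, 1)."""
    return gauss_log_pdf(z, 0.0, 1.0) + gauss_log_pdf(x, z, 1.0)


def mc_elbo(x, q_mu, q_sigma, num_samples=10_000, seed=0):
    """Estimate E_{q(z|x)}[log p(x, z) - log q(z|x)] with z_l ~ q(z|x)."""
    rng = random.Random(seed)
    total = 0.0
    for _ in range(num_samples):
        z_l = rng.gauss(q_mu, q_sigma)
        total += log_joint(x, z_l) - gauss_log_pdf(z_l, q_mu, q_sigma)
    return total / num_samples


x = 1.0
log_evidence = gauss_log_pdf(x, 0.0, math.sqrt(2.0))          # log p(x), p(x) = N(0, 2)
elbo_exact_q = mc_elbo(x, q_mu=x / 2, q_sigma=math.sqrt(0.5))  # q = true posterior
elbo_poor_q = mc_elbo(x, q_mu=0.0, q_sigma=1.0)                # q = prior
print(log_evidence, elbo_exact_q, elbo_poor_q)
```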

Divergence

KullbackLeibler

class pixyz.losses.KullbackLeibler(p1, p2, input_var=None, dim=None)

Bases: pixyz.losses.losses.Loss

Kullback-Leibler divergence (analytical).

$$D_{KL}[p||q] = \mathbb{E}_{p(x)}\left[\log \frac{p(x)}{q(x)}\right]$$

TODO: This class seems to be slightly slower than the previous implementation (perhaps because of set_distribution).

loss_text
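"Analytical" means the divergence is evaluated in closed form rather than by sampling. As a sketch, the well-known closed form for two univariate Gaussians is shown below next to a Monte Carlo check of the defining expectation. Restricting to univariate Gaussians is an assumption. Which distribution pairs pixyz handles analytically is not stated here, and the function names are hypothetical.

```python
import math
import random


def kl_gaussian(mu1, sigma1, mu2, sigma2):
    """Analytical D_KL[N(mu1, sigma1^2) || N(mu2, sigma2^2)] for univariate Gaussians."""
    return (math.log(sigma2 / sigma1)
            + (sigma1 ** 2 + (mu1 - mu2) ** 2) / (2 * sigma2 ** 2)
            - 0.5)


def gauss_log_pdf(x, mu, sigma):
    """Log density of N(mu, sigma^2) at x."""
    return -0.5 * math.log(2 * math.pi * sigma ** 2) - (x - mu) ** 2 / (2 * sigma ** 2)


def mc_kl(mu1, sigma1, mu2, sigma2, num_samples=100_000, seed=0):
    """Monte Carlo check of E_{p(x)}[log p(x) - log q(x)] with x ~ p(x)."""
    rng = random.Random(seed)
    total = 0.0
    for _ in range(num_samples):
        x = rng.gauss(mu1, sigma1)
        total += gauss_log_pdf(x, mu1, sigma1) - gauss_log_pdf(x, mu2, sigma2)
    return total / num_samples


print(kl_gaussian(0.0, 1.0, 1.0, 2.0), mc_kl(0.0, 1.0, 1.0, 2.0))
```

The closed form has no sampling noise, which is the usual reason to prefer it when it exists.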

Similarity

SimilarityLoss

class pixyz.losses.SimilarityLoss(p1, p2, input_var=None, var=['z'], margin=0)

Bases: pixyz.losses.losses.Loss

Learning Modality-Invariant Representations for Speech and Images (Leidal et al.)

MultiModalContrastivenessLoss

class pixyz.losses.MultiModalContrastivenessLoss(p1, p2, input_var=None, margin=0.5)

Bases: pixyz.losses.losses.Loss

Disentangling by Partitioning: A Representation Learning Framework for Multimodal Sensory Data

Adversarial loss (GAN loss)

AdversarialJensenShannon

AdversarialKullbackLeibler

Loss for sequential distributions¶

IterativeLoss¶

-

class

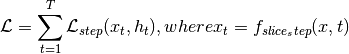

pixyz.losses.IterativeLoss(step_loss, max_iter=1, input_var=None, series_var=None, update_value={}, slice_step=None, timestep_var=['t'])[source]¶ Bases:

pixyz.losses.losses.LossIterative loss.

This class allows implementing an arbitrary model which requires iteration (e.g., auto-regressive models).

-

loss_text¶

-
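The idea of iterating a per-step loss can be sketched in plain Python: at each time step, take the current element of a series, evaluate a step loss, add it to a running total, and feed updated values into the next step. This is a hypothetical stand-in, not pixyz's implementation. The correspondence to `series_var`, `update_value` and `timestep_var` is only suggested by those parameter names and is an assumption. `iterative_loss` and `ar1_step` are illustrative names, and the AR(1) predictor 0.5 * x_{t-1} is an arbitrary example.

```python
from typing import Callable, Dict, List, Tuple

State = Dict[str, float]


def iterative_loss(step_loss: Callable[[State], Tuple[float, State]],
                   series: List[float], init: State) -> float:
    """Sum a per-step loss over time, carrying updated values into the next step.

    At step t the series value x_t is sliced out and passed in (with the
    step index under "t", an assumed convention); the values returned by
    step_loss overwrite the carried state for step t + 1.
    """
    state = dict(init)
    total = 0.0
    for t, x_t in enumerate(series):
        loss_t, updates = step_loss({**state, "x": x_t, "t": t})
        total += loss_t
        state.update(updates)
    return total


def ar1_step(inputs: State) -> Tuple[float, State]:
    """Squared error of predicting x_t as 0.5 * x_{t-1}; passes x_t on as x_prev."""
    prediction = 0.5 * inputs["x_prev"]
    return (inputs["x"] - prediction) ** 2, {"x_prev": inputs["x"]}


total = iterative_loss(ar1_step, [1.0, 0.5, 0.25, 1.0], init={"x_prev": 0.0})
print(total)
```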

Loss for special purpose

Parameter

class pixyz.losses.losses.Parameter(input_var)

Bases: pixyz.losses.losses.Loss

loss_text

Operators

LossOperator

LossSelfOperator

AddLoss

class pixyz.losses.losses.AddLoss(loss1, loss2)

Bases: pixyz.losses.losses.LossOperator

loss_text

SubLoss

class pixyz.losses.losses.SubLoss(loss1, loss2)

Bases: pixyz.losses.losses.LossOperator

loss_text

MulLoss

class pixyz.losses.losses.MulLoss(loss1, loss2)

Bases: pixyz.losses.losses.LossOperator

loss_text

DivLoss

class pixyz.losses.losses.DivLoss(loss1, loss2)

Bases: pixyz.losses.losses.LossOperator

loss_text

NegLoss

class pixyz.losses.losses.NegLoss(loss1)

Bases: pixyz.losses.losses.LossSelfOperator

loss_text

AbsLoss

class pixyz.losses.losses.AbsLoss(loss1)

Bases: pixyz.losses.losses.LossSelfOperator

loss_text
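The operator classes take one or two loss operands (`loss1`, `loss2`), which suggests a composite pattern in which arithmetic on loss objects builds a tree that is evaluated later. The sketch below assumes that such classes are produced by Python's arithmetic operators. It is a self-contained toy (`ToyLoss`, `Term`, `BinaryOp`, `UnaryOp` are hypothetical names), not pixyz's code, and its scalar handling (numbers accepted only as the right operand) is a simplification.

```python
import operator
from typing import Callable, Dict, Union

Inputs = Dict[str, float]


class ToyLoss:
    """Minimal stand-in for a loss object (not pixyz's Loss class)."""

    def eval(self, x: Inputs) -> float:
        raise NotImplementedError

    # Arithmetic on loss objects builds a new loss node instead of a number.
    def __add__(self, other):
        return BinaryOp(self, other, operator.add)      # cf. AddLoss

    def __sub__(self, other):
        return BinaryOp(self, other, operator.sub)      # cf. SubLoss

    def __mul__(self, other):
        return BinaryOp(self, other, operator.mul)      # cf. MulLoss

    def __truediv__(self, other):
        return BinaryOp(self, other, operator.truediv)  # cf. DivLoss

    def __neg__(self):
        return UnaryOp(self, operator.neg)              # cf. NegLoss

    def __abs__(self):
        return UnaryOp(self, abs)                       # cf. AbsLoss


class Term(ToyLoss):
    """Leaf node wrapping a plain function of the input dict."""

    def __init__(self, fn: Callable[[Inputs], float]):
        self.fn = fn

    def eval(self, x: Inputs) -> float:
        return self.fn(x)


class BinaryOp(ToyLoss):
    """Combines two operands; a plain number is accepted as the right operand."""

    def __init__(self, loss1: ToyLoss, loss2: Union[ToyLoss, float], op):
        self.loss1, self.loss2, self.op = loss1, loss2, op

    def eval(self, x: Inputs) -> float:
        rhs = self.loss2.eval(x) if isinstance(self.loss2, ToyLoss) else self.loss2
        return self.op(self.loss1.eval(x), rhs)


class UnaryOp(ToyLoss):
    """Applies a one-argument operation to a single loss."""

    def __init__(self, loss1: ToyLoss, op):
        self.loss1, self.op = loss1, op

    def eval(self, x: Inputs) -> float:
        return self.op(self.loss1.eval(x))


reconstruction = Term(lambda x: (x["x"] - x["x_hat"]) ** 2)
regularizer = Term(lambda x: 0.5 * x["mu"] ** 2)
objective = abs(-(reconstruction + regularizer * 2.0))  # a tree of operator nodes
value = objective.eval({"x": 1.0, "x_hat": 0.5, "mu": 1.0})
print(value)
```

Building the tree first and evaluating it on an input dict later lets one objective definition be reused across batches.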

BatchMean

class pixyz.losses.losses.BatchMean(loss1)

Bases: pixyz.losses.losses.LossSelfOperator

Loss averaged over batch data.

$$\mathbb{E}_{p_{data}(x)}[\mathcal{L}(x)] \approx \frac{1}{N}\sum_{i=1}^N \mathcal{L}(x_i),$$

where $x_i \sim p_{data}(x)$ and $\mathcal{L}$ is a loss function.

loss_text

BatchSum

class pixyz.losses.losses.BatchSum(loss1)

Bases: pixyz.losses.losses.LossSelfOperator

Loss summed over batch data.

$$\sum_{i=1}^N \mathcal{L}(x_i),$$

where $x_i \sim p_{data}(x)$ and $\mathcal{L}$ is a loss function.

loss_text
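The two batch reductions differ only by the factor 1/N, as this plain-Python sketch shows. The functions `batch_mean`, `batch_sum` and the per-example loss `squared` are hypothetical stand-ins, not pixyz code.

```python
from typing import Callable, List


def batch_mean(loss: Callable[[float], float], batch: List[float]) -> float:
    """(1/N) * sum_i L(x_i): a Monte Carlo estimate of E_{p_data(x)}[L(x)]."""
    return sum(loss(x) for x in batch) / len(batch)


def batch_sum(loss: Callable[[float], float], batch: List[float]) -> float:
    """sum_i L(x_i): the same terms without the 1/N factor."""
    return sum(loss(x) for x in batch)


def squared(x: float) -> float:
    """An arbitrary per-example loss L(x)."""
    return x ** 2


batch = [1.0, 2.0, 3.0, 4.0]  # x_i ~ p_data(x), N = 4
print(batch_mean(squared, batch), batch_sum(squared, batch))
```

The sum grows with N while the mean does not, so switching from one to the other rescales gradients by the batch size.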

Jensen-Shannon divergence (adversarial training, AdversarialJensenShannon):

$$2 \cdot D_{JS}[p(x)||q(x)] - 2 \log 2 = \mathbb{E}_{p(x)}[\log d^*(x)] + \mathbb{E}_{q(x)}[\log (1-d^*(x))],$$

where $d^*(x) = \arg\max_{d} \mathbb{E}_{p(x)}[\log d(x)] + \mathbb{E}_{q(x)}[\log (1-d(x))]$.

Kullback-Leibler divergence (adversarial training, AdversarialKullbackLeibler):

$$D_{KL}[q(x)||p(x)] = \mathbb{E}_{q(x)}\left[\log \frac{q(x)}{p(x)}\right] = \mathbb{E}_{q(x)}\left[\log \frac{d^*(x)}{1-d^*(x)}\right],$$

where $d^*(x) = \arg\max_{d} \mathbb{E}_{q(x)}[\log d(x)] + \mathbb{E}_{p(x)}[\log (1-d(x))]$.

Wasserstein distance (adversarial training):

$$W(p, q) = \sup_{||d||_{L} \leq 1} \mathbb{E}_{p(x)}[d(x)] - \mathbb{E}_{q(x)}[d(x)],$$

where the supremum is taken over 1-Lipschitz functions $d$.
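The Jensen-Shannon and Kullback-Leibler identities for the adversarial losses can be checked numerically on small discrete distributions by plugging in the closed-form optimal discriminators. With p treated as "real", d*(x) = p(x) / (p(x) + q(x)) maximizes E_p[log d] + E_q[log(1 - d)], and the maximum equals 2 D_JS[p||q] - 2 log 2. With q treated as "real", d*(x) = q(x) / (q(x) + p(x)), so d*/(1 - d*) = q/p and E_q[log(d*/(1 - d*))] = D_KL[q||p]. In practice d is a trained discriminator network; the distributions `p` and `q` below are arbitrary illustrative values.

```python
import math

p = [0.5, 0.3, 0.2]
q = [0.2, 0.3, 0.5]


def kl(a, b):
    """Discrete D_KL[a || b]."""
    return sum(ai * math.log(ai / bi) for ai, bi in zip(a, b) if ai > 0)


m = [(pi + qi) / 2 for pi, qi in zip(p, q)]
js = 0.5 * kl(p, m) + 0.5 * kl(q, m)

# Optimal discriminator for the JS objective (p treated as "real").
d_js = [pi / (pi + qi) for pi, qi in zip(p, q)]
gan_value = (sum(pi * math.log(di) for pi, di in zip(p, d_js))
             + sum(qi * math.log(1 - di) for qi, di in zip(q, d_js)))

# Optimal discriminator for the KL objective (q treated as "real").
d_kl = [qi / (qi + pi) for pi, qi in zip(p, q)]
kl_via_d = sum(qi * math.log(di / (1 - di)) for qi, di in zip(q, d_kl))

print(gan_value, 2 * js - 2 * math.log(2))
print(kl_via_d, kl(q, p))
```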